Bullets:

The economic model for the AI industry brought to us by Wall Street and Silicon Valley is falling apart, with users paying subscription fees that are far below the companies’ cost of compute.

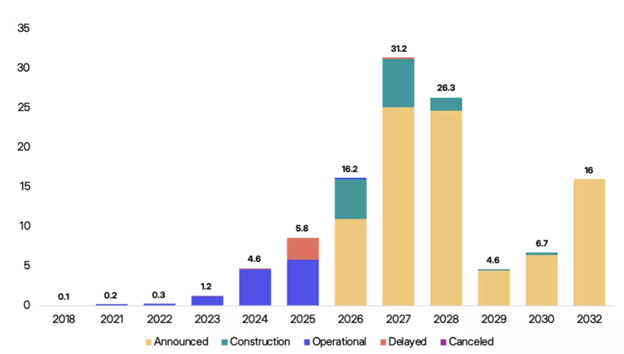

The companies are also facing severe blowback for new data center construction almost everywhere, and constraints on power grids and capital budgets have delayed dozens of projects.

Top managers of Silicon Valley AI companies are also, finally, facing harsh public scrutiny, particularly after the disastrous opening days of the War on Iran.

The Artificial Intelligence industry comprises five layers: energy, chips, infrastructure, models, and applications.

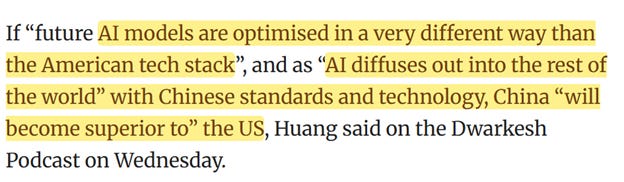

Now that Chinese Large Language Models are optimized to run on Chinese-built chips, and are soaring in popularity among enterprise users across the world, even top US industry insiders admit that Chinese tech will dominate going forward.


Report:

Good morning.

My team is a heavy user of translation software in China, and it is powered by AI systems which are very good. Several years ago a friend of mine was working with the Beta version of one of the newest models, and we sat down together and tested the software.

I was very impressed that it was able to handle, easily, native American English idioms, such as those from baseball or football. I used the terms “Hail Mary” and “out of left field” in conversation, and it was no problem. Then we got a chemistry journal, and I read a long paragraph into the translation software, which he then read back to me, so it would translate his Chinese into my English. We selected that passage because neither of us is a chemist, and we were probably mispronouncing the words. The word order was changed, slightly, but otherwise it was perfect.

I then took an article from ZeroHedge, about the market internals from a recent Treasury bond auction. It was very high-level finance, and the translator again, back-and-forth, was perfect.

A few months later, my group was in Guangdong, meeting with engineers at a factory that builds manufactured housing. This factory group builds small homes and buildings for dozens of countries across the world, and I was asking if they could design for the US market. I asked them about Southwest house designs. They ran it through their system, and in a few minutes had generated plans and rough price estimates. Then I did the same for ranch-style, contemporary, and cabana houses that are popular in resort areas. Each time, the AI software generated comprehensive designs and prices.

We were getting excited, because we can open vast new markets in North America and Europe, using these factories. Then I moved to the northern part of the country, and asked about Craftsman homes, and Cape Cods, which are very popular in the Northeast.

Their software generated designs and plans, along with costs, but their engineers refused to build those. They didn’t like the different roof pitches and dormers, and especially disliked the chimneys and fireplaces. They had no experience building anything like them, and refused to do so even though their software was telling them how.

Later I began to notice some issues with the translators, which are used by everybody now. For example, when I said, “Let’s deal with that problem next year”, the AI translated that exactly, and the Chinese group wrote down the date. But that isn’t what I meant. The meaning of that phrase depends on how the English speaker says it. Usually, we would mean that we don’t want to think about that problem right now; we have other priorities, and let’s come back to it later. But the AI runs off of probabilistic models, and will generate an answer based on what the speaker MOST LIKELY is trying to say.

And there were problems with time constructions. In the example, “we need to fix that in the next year”, the software said that it is one year from today—this date, next year. Maybe I mean that, but I may mean that it needs fixing before the end of the next year, which is 2027.

A professional translator—a person—would stop the conversation and ask me to be specific, because it could be translated in different ways. And a true “intelligent agent” software system should do that, too.
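To make that concrete, here is a minimal sketch, in Python, of what “stop and ask” could look like. Everything in it is an assumption for illustration: the function names, the single hard-coded phrase, the two readings, and the sample date are hypothetical, and this is not how any real translation product is built.

```python
from datetime import date


def one_year_later(d: date) -> date:
    """Same calendar day next year; Feb 29 falls back to Feb 28."""
    try:
        return d.replace(year=d.year + 1)
    except ValueError:
        return d.replace(year=d.year + 1, day=28)


def deadline_readings(phrase: str, today: date) -> dict[str, date]:
    """Return every plausible deadline for a phrase (hypothetical rule set).

    Only one ambiguous construction is hard-coded here, for illustration.
    """
    if "in the next year" in phrase.lower():
        return {
            "within twelve months of today": one_year_later(today),
            "before the end of the next calendar year": date(today.year + 1, 12, 31),
        }
    return {}


def translate_or_ask(phrase: str, today: date) -> str:
    """Ask the speaker to choose when more than one reading exists."""
    readings = deadline_readings(phrase, today)
    if len(readings) > 1:
        options = "; ".join(
            f"{label} ({d.isoformat()})" for label, d in readings.items()
        )
        return f"CLARIFY: did you mean {options}?"
    return f"TRANSLATE: {phrase}"


if __name__ == "__main__":
    # Assumed "today"; the article only says that next year is 2027.
    print(translate_or_ask("We need to fix that in the next year", date(2026, 5, 1)))
```

The design choice is simply to return all of the readings instead of the single most probable one, and to hand the decision back to the speaker, which is what the human translator does.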

I learned that I need to be more careful than ever, especially when we are discussing time and money. We are impressed that these systems can handle obscure idioms, from both Chinese and English. But we are concerned that they throw off confusing translations for important issues in scheduling and budgeting.

The human element is everything. These are very powerful and effective tools in the hands of people who know how to use them properly, and who understand that what appears on our screens needs to be carefully watched and, when necessary, overruled.

These tools are used by everyone in China, in business and industry. They are ubiquitous in manufacturing, engineering, academia, medicine, law, and accounting and finance. But we are a long way from having the machines do the thinking or work that can replace deeply experienced people.

But that is not what was promised by the Silicon Valley AI hyperscalers and AI giants. To illustrate that issue, consider the differences between how AI is being widely used here in China and how it is being used in the United States. More to the point, consider what we thought we were supposed to have, compared to what we got.

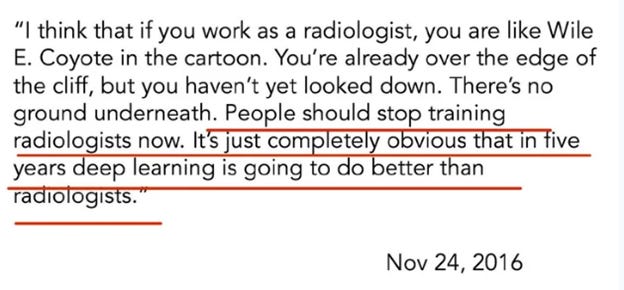

Geoffrey Hinton won the Nobel Prize in Physics for pioneering work in machine learning and artificial neural networks. He became known as the “Godfather of AI,” and gave a speech in 2016 in which he predicted that AI would be so transformative in radiology that it is a waste of time to even study it anymore. “People should stop training radiologists” right now. It’s “completely obvious that in five years deep learning” will be “better than radiologists.”

That prediction aged badly, it turns out. But imagine a kid born in the year 2000, who gets great grades, and who sees that speech from a Nobel Prize winner, and how that might inform future career choices. Should he go to medical school? Or is he better off heading to Wall Street or Silicon Valley instead?

The world doesn’t have enough radiologists, and that problem is particularly acute in China, and in developing countries. Here we have the technology and the machines to generate scans, but a shortage of the people who can read them.

In China, AI is being widely used in radiology, because it enables them to do more work, in less time. It’s an important tool in the hands of people who have top medical school training, and so AI coursework is bundled in at the university level. In medical imaging, AI technologies and the need for efficiency and accuracy in patient diagnosis are closely correlated, particularly in elder care. AI systems are rolling out across China’s hospital systems, first informed by top radiology centers across the country, then the tools given to other doctors—medical experts—in hospitals outside Tier-1 cities.

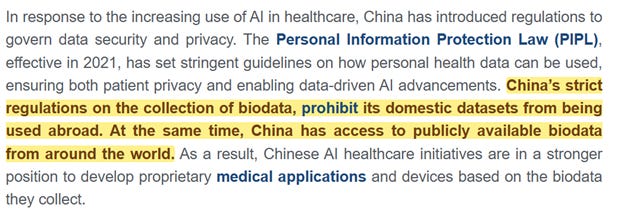

This is an issue we need to remember for later. China considers its citizens’ patient data as highly confidential, a national security issue, even. So it’s unthinkable that Chinese medical data will be shared with the large language models used by Silicon Valley:

The second sentence there is true, though: Chinese researchers do enjoy access to patient datasets from other countries that are being used by those models.

So in China, and in neighboring countries, AI is being used across medicine to replicate the top advice and quality of diagnostic care that until recently were only available in the top cities. And that’s how it did play out in the United States, too. The radiology industry in the United States is doing just fine, and radiologists are making more money than ever, especially in the big cities. If you’re in an American hospital, you might be getting the top care from radiologists pulling down hundreds of thousands of dollars a year, while using the latest AI tools.

But it is at the individual and household user level where the serious problems are. When the AI is used by normal people (not medical professionals), the AI simply isn’t good enough, but the users do not know that. Tens of millions of Americans are getting health advice from chatbots, and the results are awful. This is a study that was just published by the Journal of the American Medical Association, which tried to measure the reliability of large language models used in clinical environments. The 21 models tested failed over 80% of the time in cases where more than one medical condition might be present. When the researchers put in physical exam notes and lab results, the failure rate was 40 percent.

One in four US adults are already using OpenAI’s ChatGPT and the other bots for medical advice, and a lot of that motivation comes from the affordability issue: 41% said they either don’t want to pay the bill or cannot afford to. Over 60 million Americans are asking ChatGPT, among other things, if their medical condition is serious, or if a particular rash is infected, or if the symptoms they have may be a communicable disease, and 80% of the time they are told the wrong thing. For lay users, the LLMs hallucinate.

And that goes back to the adult supervision problem, and the question of what AI actually even is. When I use these tools, in my day-to-day life, and in my career, I can’t shake the feeling that I am seeing very, very fast search, bundled with other good software. The manufactured house company was simply running internet searches for Cape Cod houses, and the results were fed into CAD/CAM software. The obscure English idioms from American sports can also be looked up, online, and fed back. CAD/CAM isn’t new, and neither are internet searches.

What is new, is that these tools are on my iPhone, instead of my laptop. So are the heroes for this story the guys at Foxconn and Apple, who’ve put a faster processor and a better microphone in my smartphone? Is it the people at Huawei and ZTE, who have installed 5G everywhere? (These tools work poorly on 4G, or on older versions of electronic devices).

Perhaps most of all, I learned that almost nobody is paying for this technology. We all use it, heavily, but it’s free. That led me to wonder who is making investments of hundreds of billions of dollars, and how they hoped to get their investments back?

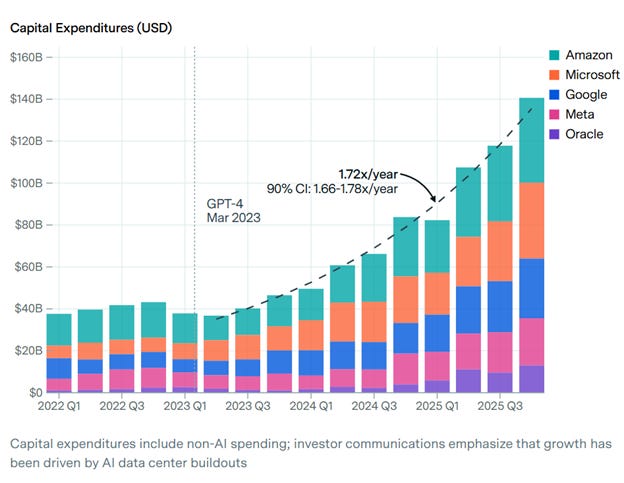

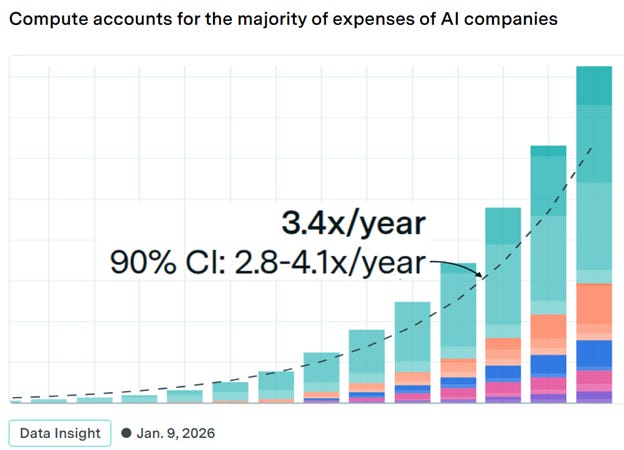

What is the justification for all that CAPEX? Those five companies (Amazon, Microsoft, Google, Meta, which is Facebook, and Oracle) have quadrupled their capital expenditures just since the release of ChatGPT-4.

The biggest rise in their expenses comes from the high costs to run their large language models, which they are not charging users for. That’s to say that every one of those companies is losing money on AI, and fortunately for most of them they have massive operating cash flows from other divisions to cover the bills.

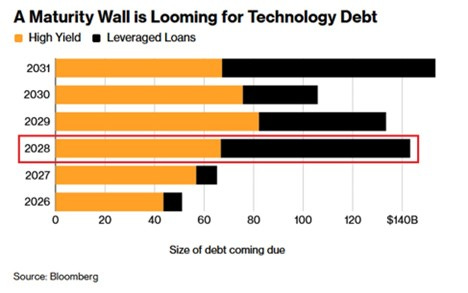

That is not true of Oracle, by the way. They are in a very different place. And today the industry is staring down a tsunami of maturing debt that funded all these expansions:

There is no magic bullet here, to pay debt down. It’s the same for every other company. That debt needs to be rolled over, most of it at junk bond rates. Or converted into equity, somehow, but you only do that if everyone has a gun pointed at their heads. Or it may be bankrupted away, unless these companies can suddenly get users of AI to pay a lot more to use the programs than they are paying now.

The companies need to pivot, and fast, away from the economic model that has underpinned this industry from the beginning. Users are not paying nearly enough for the AI services that they use, and now the investment thesis for the entire industry is threatened.

But there are strong ethical and moral questions to be raised as well. Karen Hao writes from inside the industry. She went to MIT, and knows personally a lot of the people in those companies that develop the AI. She wrote a really compelling book, and is appearing more often on podcasts. (Note to investors: if you have investments in the AI theme, you are well advised to spend some time, to ask yourself if the people running these companies conform with your values.)

Her takeaway is that, at a minimum, the people in charge of those organizations are mediocrities. They’re unimpressive. These are not, then, the people who brought us the transistor or the word processor or the spreadsheet or the internet – they’re just guys who were given a lot of money to spend, but who cannot answer even basic questions about what their company even does, or what AI even is.

We’re still a long way from artificial general intelligence, meaning machines that can learn and think on their own, and we likely will never get there. And hopefully so, I should say. The hundreds of billions of dollars that have already gone out the door haven’t bought much, besides answering basic questions and translating them, which are things we already had.

At best the industry has overpromised and underdelivered. But she also points out much worse: The human rights abuses. Privacy violations and labor exploitation. Human beings doing inhuman things, to make more money.

These are people she went to college with and was friends with, and she was left to wonder if the world would be a better place if these companies didn’t exist, and if the people who run them had never been born.

Now, the rest of the world is wondering that too. The Department of War has apparently outsourced missile targeting to Artificial Intelligence, and on the very first day of the War on Iran the United States committed war crimes. At least 175 people were killed, mostly kids, when a Tomahawk missile fired from the USS Spruance blew up a school. It was a “targeting mistake”; the school was once part of a complex on the military base nearby, and CENTCOM’s target coordinates came from old data.

Ten years ago, the school buildings were fenced off from the military base. The watchtowers were taken down, it was opened to the public, and the Iranians built playgrounds and athletic fields. DIA and other agencies have scores of analysts whose job is to develop target sets, using current satellite data.

“Military targeting is complex and involves multiple agencies”, and “many people are responsible to verify” the data are correct. “But in a fast-moving situation, like in the opening days of the war, the information is sometimes not verified.” We’ll stop right there and point out that these targets were selected before the war started, and everybody just mentioned so far is sitting in a safe, air-conditioned building far away. This was not a fog-of-war situation where a combat unit is taking fire from all directions and is making split-second life-or-death decisions, and collateral damage might result.

What most Americans might have expected here is that before a bomber or a missile gets launched toward a target, first we have a bunch of those people poring over that satellite data and making sure it really is a military base. Park a satellite over the place, watch it for a few days, then somebody might say, “is this really a rocket base? Because there’s no soldiers coming and going. It’s mostly little kids. Are we sure this isn’t a kindergarten? Because it looks a lot like a kindergarten.”

The New York Times, in an understatement: “one of the most devastating single military errors in decades.”

It was a war crime, and naturally everyone involved is trying to distance himself from it as best he can, out of self-preservation. Here is the Military Times two weeks later: Democrats in the House of Representatives are asking about the Maven Smart System. Maven integrates AI and machine learning in logistics and targeting, and is embedded in CENTCOM operations. It’s built by Palantir, who got $1.3 billion from the Pentagon to handle the “information overload” problem. The system brings together satellite imagery, drone feeds, radar and SIGINT, then classifies targets and generates strike packages, “compressing kill-chain reasoning and decision making into the fastest timelines ever seen.”

If this sounds to you a lot like the opening scenes from “The Terminator”, and that Palantir just built Skynet, you’re not alone. What’s more, Maven is bundled with Claude, from Anthropic, and the AI also writes legal briefs to justify each strike. In the first 24 hours, the system generated hundreds of targets, enabling the US to hit over a thousand targets in that time.

Did a human being at any time verify the accuracy—or even the validity—of the target? No answer yet, and we should be shocked if anyone actually steps forward and says, yes, that was me. Because this is a war crime and a reckoning is due. And what did the American taxpayer get for our $1.3 billion? Did we get a better system? The Maven system can correctly identify targets with a 60% accuracy. Human analysts are at 84%. So, no. And Maven performs even worse in bad weather. When the Air Force ran a test with AI, it scored just 25% accuracy in real conditions. But that may have been the result of being fed the wrong information, again under battlefield conditions.

Every single word of that is terrifying, and even that 84% accuracy rate for human analysts isn’t nearly good enough. But in the case of this school in Iran, it’s hard for a rational person to understand how this could have happened. The school had a website and was searchable online. Archives of satellite photos show the school being there for at least eight years, and recent images show school buildings and playgrounds.

So where was everybody? Who was there to make sure that what was coming out of Palantir’s AI wasn’t a hallucination? To ensure that it was true? No matter; the Pentagon is full speed ahead with Maven. It’s now the official program of record and is being rolled out across all branches.

And it’s not just the War Department. Palantir is working secretly with the FAA to overhaul the air traffic control system in the United States. This was after. This is dated April. Just six weeks after the Pentagon used Palantir’s software to blow up a school full of little Iranian girls, now the FAA wants them for a “stealthy program” to redesign the civilian air travel system.

This is nowhere close to over. Investigations are coming, and we already know how they’re going to go. Every single human being involved will do anything to take the heat off himself, out of self-preservation. You can legally delegate authority, but cannot delegate responsibility, so it will fall on the people who designed that system and turned it on. And everybody, from the top brass in the command centers to the guy who pressed the launch button to the members of Congress who rubber-stamped this war without even a discussion, will try to blame the AI. Whether or not the families of the Iranian girls get the justice they deserve is an open question, but the die on this is cast.

This cannot go on for much longer. It cannot. Americans have now seen what happens when we turn over these decisions to automated systems, from Silicon Valley companies and the people who run them, and are outraged. Up until now, nobody was asking hard questions, except for just a handful of people like Ed Zitron and Karen Hao.

That’s changing, finally. The New Yorker just dropped a very critical piece on Sam Altman at OpenAI, and before that Tucker Carlson did one of the most eye-popping interviews in the history of YouTube, which we’ll just link to and leave for you to draw your own conclusions.

These are the wealthiest and most powerful people in the world. It is Wall Street and Silicon Valley and the White House and the Department of War. Now a lot more people are thankfully paying attention, and extra credit is especially due to the ones who questioned it from the very beginning, and did so at great risk to themselves.

It’s far too late in coming, but finally people are awake to what this industry is, and who is in charge of it. The heads of these companies are deeply distrusted, even by their own boards of directors and business partners. They are deeply disrespected, even by their own engineering teams, because they don’t even understand the science or the technology that they’re supposedly in charge of.

For everyday Americans, it was always hard to see what we were getting out of this deal. Silicon Valley companies and Wall Street take our private data, our medical histories, our browsing habits, all of our banking transactions and what we like to shop for and buy and wear and read; even our own work product. Then they jam it into one of their closed-source large language models so they can charge companies to use it, who then lay off hundreds of thousands of people.

And by the way, in order to make all that work, we have to agree to let them build giant new power plants, which only their data centers are allowed to use. Because according to them, we don’t need doctors anymore. Or air traffic controllers. Or military analysts who can see a school in a photograph and cancel a missile strike. We don’t even need lawyers to write up the legal briefs after the fact. Just trust those guys.

As horrifying as this is to normal Americans, we shouldn’t wonder how it all reads and sounds in Beijing. Or in the capital of any responsible country. We shouldn’t wonder if China is reconsidering, for example, their policy about sharing their citizens’ private medical data with Silicon Valley companies who want to use it to build better LLMs in medicine, which Wall Street investors can then charge money to use. It’s out of the question.

This is Jensen Huang. Mr. Huang is CEO of Nvidia, and Nvidia builds the GPUs and chips which in turn are used by the data centers where all those superfast searches and outputs happen. Nvidia is a $5 trillion company, and Jensen Huang is not one of Karen Hao’s mediocrities who is only good at getting investors to hand over their money, and who cannot explain what his own company does. He can. He has a deep understanding of the engineering; he has a deep understanding of the science.

He also has a deep understanding of China, and so he knows he and Nvidia have a big China problem. He appeared on this podcast, and starting from one hour 3 minutes in, we can see what’s at stake for Nvidia, and the other companies in the American AI industry.

He makes repeated reference to the 5-layer AI stack. Some people call this the 5-layer cake of AI. At the bottom, the foundation, is energy. Because it takes a lot of energy. Then come the chips, which is where his company is. On top of chips come the data centers, which need lots of chips and lots of energy. Then come those large language models, and on top of everything is the applications layer. The applications layer is about who is using this technology, and for what, and whether those users are paying enough to pay the bills of everybody else and everything else underneath them.

Jensen Huang knows that China already owns four of those layers in that stack, and Nvidia is barely hanging on in chips. So he is gravely concerned here, about what it means from now on if China’s large language models can run on chips from Huawei (a Chinese semiconductor manufacturer that also makes lots of other products, like 5G towers and downstream products that use AI).

China has abundant energy and an abundance of smart people. When Chinese-built applications are used by their billion users here and a couple billion more from across the world (and that includes all of the Chinese factories and supply chain nodes and trading partners), and that all happens on a fully integrated and fully made-in-China stack, it’s game over for the American AI industry:

But that transition is already in motion. “Delete America” is official China government policy, and it’s just what it sounds like. In 2022 regulators directed all state-owned companies in China to replace foreign software by 2027.

The regulation covers everything from finance to energy to supply chain management, and is part of a long push for self-sufficiency in everything, including semiconductors. Jensen Huang knows what that means for his company, which is that Nvidia needs to stay so far ahead of Huawei, and the other Chinese chipmakers, that he can push further out the day that Chinese chips replace Nvidia’s across Chinese LLMs. When those models are optimized to run on Chinese architecture, and that AI is then adopted by the rest of the world, the future of tech will be all-China.

DeepSeek is a Chinese large language model. Its Version 3 ran on old Nvidia chips, using old Hopper system architecture. DeepSeek v4 was just released, and the first reports are that it does run on Huawei Ascend chips, and they’re already taking orders for hundreds of thousands of units. Two more models are coming, also built to run on Chinese semiconductors.

That leaves Huang to explain what the problem is: Everyone assumes that Nvidia chips just perform so much better, and that Nvidia’s tech is so far ahead, that Huawei chips will just run those models less well. He insists that’s not true. China can just use more of those chips, stacked differently, because electricity in China is basically free, which means the cost of compute is far below the bills the Silicon Valley companies run.

Abundant energy is the first layer in that stack. China has it, and the United States and Europe do not. If AI data centers were one country, that country would be the fifth largest consumer of energy in the world. The United States is 45% of that consumption, which will more than double by 2030. So that is the equivalent of adding an entire Canada worth of power consumption to the American grid, just for the data centers.

That’s not going to happen, anyway. The pushback is already severe, and the data centers that were supposed to be finished last year (2025) still aren’t done, and much of the capacity that was supposed to come online this year has not even begun construction:

The centers are not being built, and that means that the power will not ever be there to turn on all those machines that companies have already ordered from Nvidia.

Those energy bottlenecks do not exist here in China; they do not even register as a concern in this discussion.

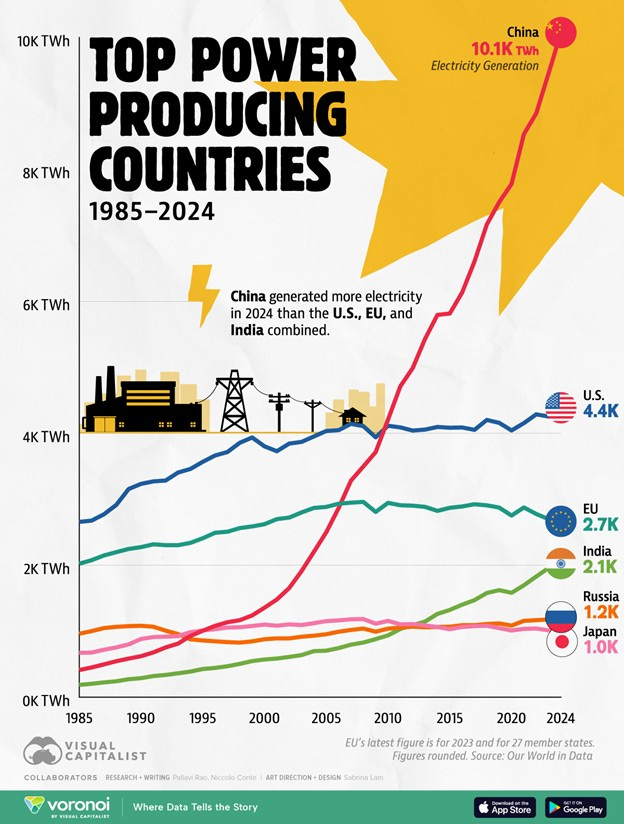

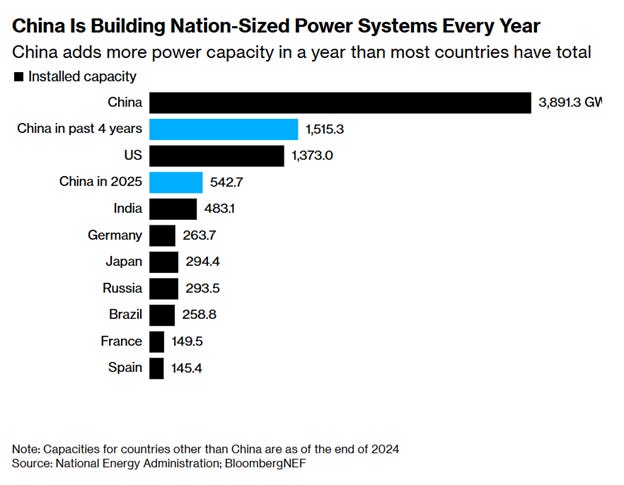

In 2024, China generated more power than the United States, the European Union, and India combined. In the US, power generation rose slightly over the past 25 years, from just under 4,000 terawatt-hours to just over 4,000. The EU dropped slightly. In that same time China went up over 10x. In just four years, China built more power capacity than the entire United States did in over a hundred years. In a single year China ADDS more power than most countries produce:

Infrastructure is 5G towers and data centers, mainly. And data centers run on chips, which come from Nvidia and Huawei and others. There are 561 data centers in operation in China, right now, and surprisingly many of them were built and operated by the Silicon Valley hyperscalers. And in more bad news for Nvidia, the newest ones are running on Chinese semiconductors instead of Nvidia’s. Alibaba also builds chips, in addition to building the Qwen Large Language Model, in addition to running the biggest B2B exchange in the world for manufactured products.

And while data centers in the United States and Europe are not getting built at all, in China they’ve done for data centers in AI what they do in every other industry: they overbuild, to create excess capacity today in anticipation of future demand, instead of in response to current demand. So, in China they’ve got the exact opposite of the conditions that exist in North America. Now there is so much capacity, so many chips, that the market is falling apart. Just a year ago an Nvidia H100 chip would go for $28,000 on the black market, and today the price has fallen so much they’re not getting bids at all. The costs are plunging, and so are the profits.

Even recently that was a big business, buying and renting high-priced GPUs from Nvidia for the Chinese large language models to run on. But that industry is dead. And the main driver of why is that the Chinese LLMs just run differently than the ones from Silicon Valley. The newest Chinese models are optimized to use computing power efficiently, which means the need for the high-performance, but high-energy-consumption, chips from Nvidia goes away. Rental costs for those GPUs have dropped by more than half and are now at an all-time low. Nvidia chips are just more expensive to run, while the prices paid by end users have fallen, so data centers running Nvidia can’t even pay their electric bills, even though the electricity in China is practically free.

It was DeepSeek that transformed the industry’s approach to that problem, and blew up the math on how much it costs to develop the newest and best AI systems. It also cast doubt on all that CAPEX by Silicon Valley hyperscalers. This is a strong summary of the steps involved in developing a new LLM, and where the costs come from.

What DeepSeek did was upend the trend of just throwing more money at compute. In DeepSeek’s financials they claim it cost under $6 million to train their Version 3, which doesn’t include some major costs, but the comparable costs to train US models are many times higher. DeepSeek did it by redesigning the network architecture of the system itself. The company did NOT have access to the best chips, and that forced them to innovate, and it resulted in a product that was completely different, while also costing much less to run.

What’s more, DeepSeek is open-source, and was quickly adopted by developers across the world with few restrictions. This paper compares apples to apples, the DeepSeek, and ChatGPT, and Gemini, and explains that these crazy cost differences derive from hyper efficient algorithms and cloud systems, and overall better resource optimization.

This group believes that the total development cost of DeepSeek is probably over $500 million, but the overall efficiencies of the DeepSeek model will immediately be copied by Western labs. It was a quantum jump in capability, and they conclude that it doesn’t really even matter much how much it cost to build DeepSeek, it’s just a much better model given the cost inputs required to generate results.

Kimi is an LLM from Moonshot AI, and developers that previously were using proprietary APIs (from Anthropic or OpenAI, probably) are migrating over to Kimi. It is already foundational to AI systems. “It’s becoming infrastructure.”

So Chinese large language models are taking over, because they are at least as good as the ones that come out of Silicon Valley, and cost far less to run. And Nvidia’s worst nightmare is already here, because those LLMs run on chips that come from China, and so nobody here needs Nvidia chips anymore and the prices are collapsing. So Jensen Huang has a lot of things to worry about, along with investors who have big bets on US firms in the AI stack.

The application layer is everything, by far the most important. That’s users. It’s companies and creators and factories and managers and engineering teams – who use these tools to do their jobs better. The application layer was always going to be China, anyway, by default. Because China is where the factories are. All the world’s supply chains run through here, and global logistics. The BRICS bloc and the Global Majority countries have built a financial system outside of SWIFT and Western banks and regulators, and the US dollar, to handle all that trade. And remember again that it is the official policy of the Chinese government, that not a single piece of software from the United States will be used to run any of it.

This industry, the AI industry, is not going away. It’s already too important, already a key driver of productivity in manufacturing, and supply chain, and in medicine and in IoT and in clean energy and in transportation and logistics and shipping and finance. It will be critical in everything, because it’s such a valuable tool in the hands of people who are using it ethically, and who are watching over the results carefully.

But the industry will go away from the United States, because our companies were set up to enrich and empower only themselves, instead of their users, and all under the pretense that their AI was smart and good enough to replace smart people and good people. But it never was.

Be Good.

Resources and links:

The Stargate Project: Trump and OpenAI announce $500 billion AI venture

https://mashable.com/article/stargate-project-trump-openai-oracle-softbank-500-billion-venture-ai-infrastructure

Nuclear Powered Data Centers: Microsoft Bets on SMRs to Fuel the Cloud

https://www.captechu.edu/blog/nuclear-powered-data-centers-microsoft-bets-smrs-fuel-cloud

Meta signs 3 deals for nuclear energy to power AI data centers

https://www.cbsnews.com/news/meta-nuclear-power-deals-ai-data-centers/

How China Won the Open-Source LLM Race — and Why It Matters

https://thisweekinaiclub.substack.com/p/how-china-won-the-open-source-llm

Dario Amodei Doubled Down On His AI Jobs Warning. Here’s What’s Different Now

https://www.forbes.com/sites/kolawolesamueladebayo/2026/02/21/dario-amodei-doubled-down-on-his-ai-jobs-warning-heres-whats-different-now/

Sam Altman May Control Our Future—Can He Be Trusted?

https://www.newyorker.com/magazine/2026/04/13/sam-altman-may-control-our-future-can-he-be-trusted

Energy demand from AI

https://www.iea.org/reports/energy-and-ai/energy-demand-from-ai

Nvidia’s Jensen Huang warns Huawei chips for DeepSeek AI models would be ‘horrible’ for US

https://www.scmp.com/tech/article/3350460/nvidias-jensen-huang-warns-huawei-chips-deepseek-ai-models-would-be-horrible-us

YouTube, Dwarkesh Patel and Jensen Huang – Will Nvidia’s moat persist?

Exclusive: DeepSeek’s upcoming new AI model will be able to run on Huawei chips, a major milestone in China’s quest for semiconductor self-sufficiency.

https://www.reuters.com/world/china/deepseeks-v4-model-will-run-huawei-chips-information-reports-2026-04-03/

DeepSeek’s V4 model will run on Huawei chips, The Information reports

DeepSeek’s New AI Model Will Be a Victory for Huawei

https://www.theinformation.com/articles/deepseeks-new-ai-model-will-victory-huawei

China Added 543 Gigawatts in New Power Capacity in 2025

https://oilprice.com/Latest-Energy-News/World-News/China-Added-543-Gigawatts-in-New-Power-Capacity-in-2025.html

China’s Four-Year Energy Spree Has Eclipsed Entire US Power Grid

https://www.bloomberg.com/news/articles/2026-01-28/china-s-four-year-energy-spree-has-eclipsed-entire-us-power-grid

Top power-generating countries in 2024

https://www.globaltimes.cn/page/202506/1335418.shtml

Ranked: Top Countries by Annual Electricity Production (1985–2024)

https://www.visualcapitalist.com/ranked-top-countries-by-annual-electricity-production-1985-2024/

I Spent 2 Months Building on Kimi K2 — It’s Quietly Becoming AI’s Open-Source Backbone

https://medium.com/@mohit15856/i-spent-2-months-building-on-kimi-k2-its-quietly-becoming-ai-s-open-source-backbone-d1e0cd3d885c

Development Cost Data + Statistics Comparison: DeepSeek R1’s $5.6M vs. ChatGPT-4 and Google Gemini Ultra

https://softwareoasis.com/development-cost-comparison/

DeepSeek Debates: Chinese Leadership On Cost, True Training Cost, Closed Model Margin Impacts

https://newsletter.semianalysis.com/p/deepseek-debates

Why building big AIs costs billions – and how Chinese startup DeepSeek dramatically changed the calculus

https://theconversation.com/why-building-big-ais-costs-billions-and-how-chinese-startup-deepseek-dramatically-changed-the-calculus-248431

DeepSeek’s hardware spend could be as high as $500 million, new report estimates

https://www.cnbc.com/2025/01/31/deepseeks-hardware-spend-could-be-as-high-as-500-million-report.html

Alibaba-backed Moonshot releases its second AI update in four months as China’s AI race heats up

https://www.cnbc.com/2025/11/06/alibaba-backed-moonshot-releases-new-ai-model-kimi-k2-thinking.html

Millions of Americans Are Talking to AI Instead of Going to the Doctor, and It’s Giving Them Horrendously Flawed Medical Advice

https://futurism.com/artificial-intelligence/millions-americans-ai-instead-doctor-bad-advice

Large Language Model Performance and Clinical Reasoning Tasks

https://jamanetwork.com/journals/jamanetworkopen/fullarticle/2847679?utm_campaign=articlePDF

There Are Signs of a Massive AI Backlash

https://futurism.com/artificial-intelligence/signs-massive-ai-backlash

Global energy demands within the AI regulatory landscape

https://www.brookings.edu/articles/global-energy-demands-within-the-ai-regulatory-landscape/

Energy Markets Race to Solve the AI Power Bottleneck

https://www.morganstanley.com/insights/articles/powering-ai-energy-market-outlook-2026

Military Times, Deadly Iran school strike casts shadow over Pentagon’s AI targeting push

https://www.militarytimes.com/news/your-military/2026/03/24/deadly-iran-school-strike-casts-shadow-over-pentagons-ai-targeting-push/

The Growing Problem of Radiologist Shortage: China’s Perspective

https://www.kjronline.org/pdf/10.3348/kjr.2023.0839

AI in Chinese healthcare: From medical imaging to AI hospitals

https://daxueconsulting.com/ai-healthcare-china/

New York Times, U.S. at Fault in Strike on School in Iran, Preliminary Inquiry Says

https://www.nytimes.com/2026/03/11/us/politics/iran-school-missile-strike.html

China is already a powerhouse in AI for radiology and medical imaging. Next they’re going global.

The Nobel Prize, Geoffrey Hinton

https://www.nobelprize.org/prizes/physics/2024/hinton/facts/

Ed Zitron’s Where’s Your Ed At

Karen Hao on AI tech bosses: ‘Many choose not to have children because they don’t think the world is going to be around much longer’

https://www.irishtimes.com/culture/books/2025/08/09/karen-hao-on-ai-tech-bosses-many-choose-not-to-have-children-because-they-dont-think-the-world-is-going-to-be-around-much-longer/

Maven Smart System

https://www.missiledefenseadvocacy.org/maven-smart-system/

Bombed Iranian girls school had vivid website and yearslong online presence

https://www.reuters.com/investigations/bombed-iranian-girls-school-had-vivid-website-yearslong-online-presence-2026-03-12/

FAA quietly developing AI-enabled air traffic management system

https://theaircurrent.com/air-traffic-control/faa-smart-ai-predictive-air-traffic-management-system-palantir-thales/

Wall Street Journal, China Intensifies Push to ‘Delete America’ From Its Technology

https://www.wsj.com/world/china/china-technology-software-delete-america-2b8ea89f

Sanctions against Huawei fail, then birth “Delete America” campaign across China’s supply chains

China Data Center Locations (561)

https://www.datacenters.com/locations/china

Alibaba launches data center with 10,000 of its own chips as China ramps up AI push

https://www.cnbc.com/2026/04/08/china-alibaba-data-center-ai-chips-zhenwu.html

China built hundreds of AI data centers to catch the AI boom. Now many stand unused.

https://www.technologyreview.com/2025/03/26/1113802/china-ai-data-centers-unused

AI Whistleblower: We Are Being Gaslit By AI Companies, They’re Hiding The Truth! - Karen Hao

Silicon Valley Insider EXPOSES Cult-Like AI Companies | Aaron Bastani Meets Karen Hao

“The problem is Sam Altman”: OpenAI insiders don’t trust CEO

https://arstechnica.com/tech-policy/2026/04/the-problem-is-sam-altman-openai-insiders-dont-trust-ceo/

Nearly half of US data centers planned for 2026 are facing delays or cancellation

https://www.techspot.com/news/111947-nearly-half-us-data-centers-planned-2026-facing.html

YouTube, Tucker Carlson, Sam Altman on God, Elon Mu