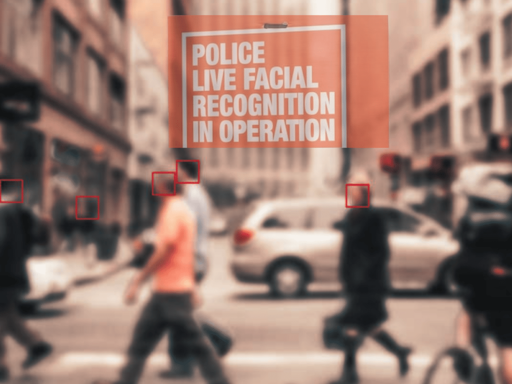

The UK can now roll out a racist surveillance measure that had been delayed by a legal challenge filed by anti-facial-recognition campaigners. The campaigners have now lost their appeal. Met commissioner Mark Rowley has once again praised facial recognition. He believes the technology helps to catch criminals, while civil liberties groups are not quite so sure…

Imminent roll-out

Rowley said facial recognition technology would:

help us catch more criminals quickly and precisely, saves officer time, and ultimately saves money.

And police minister Sarah Jones, channelling every authoritarian since time began, says only the guilty should be fearful…

I welcome today’s ruling because there can be no true liberty when people live in fear of crime in their communities.

Live facial recognition only locates specifically wanted people — law abiding citizens have nothing to fear.

Jones bizarrely implied that criticism from people challenging the excessive deployment of facial recognition technology was unwarranted, saying:

This technology puts dangerous rapists and murderers behind bars — and I question any group who call that uncivil.

We are rolling out facial recognition across the country with record investment to keep communities safe.

A bit of a reach, minister…

Lost appeal ushers in surveillance state

Two concerned citizens had challenged the roll-out in the courts:

Youth worker Shaun Thompson, and Silkie Carlo, director of campaign group Big Brother Watch, brought the challenge over concerns that facial recognition could be used arbitrarily or in a discriminatory way.

The pair had argued that the use of the van-mounted technology:

breaches the right to privacy outlined in the European Convention of Human Rights.

Judges presiding over the case ruled:

We are not able to accept, on the thin submissions advanced before us, that concerns about discrimination infect the legality of the policy.

The government and police claim that the technology has resulted in few mistakes. However, Thompson said he had been misidentified by facial recognition:

No one should be treated like a criminal due to a computer error, I was compliant with the police, but my bank cards and passport weren’t enough to convince the police the facial recognition tech was wrong.

He likened its use to:

stop-and-search on steroids.

For several years now, the Canary has been covering the risks of facial recognition. On 1 November 2025, we shed light on the ways in which AI-integrated facial recognition is inherently racist, noting that the:

UN’s office for human rights, as well as anthropologists and tech experts, have long known that AI systems are inherently racist, either by design or through the biases of their creators — but the police facial recognition systems are going above and beyond in the service of racist discrimination.

Facial recognition’s race bias

Examples include the case of one of the claimants in the latest ruling, Shaun Thompson. Big Brother Watch, the civil liberties NGO led by the other claimant, Silkie Carlo, warned in the same month that cops were feeding passport photos into their AI.

Further evidence of the racialised use of AI facial recognition emerged on 15 September 2025. Police used the technology at Notting Hill Carnival, an annual celebration of black British culture, but not at the fascist-organised Unite the Kingdom event.

British securocrats have got their way today. Those of us who care about basic, hard-won freedoms must continue to challenge the UK’s bipartisan authoritarianism wherever we can.

Featured image via Unsplash/the Canary

By Joe Glenton

From the Canary.