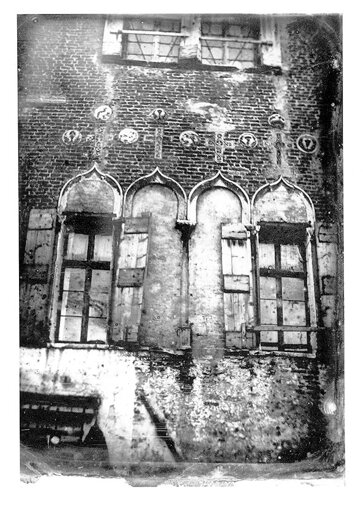

The water in the lagoon was licking the stones of the Zattere. They say that acqua alta is handled well now, that the MOSE floodgates work. Yet the damp is still climbing the brickwork of Palazzo Treves.

I read a press release from Mountain View announcing Google’s new “Live Translation” feature. It promises a frictionless flow of information, a world where the Babel fish has been turned into a bullet point in a product deck. The goal is to flatten every expression into a single convertible currency, with the flood of all differences held back at the gate. Call it the “hydraulic management of meaning”: a reclamation project for language.

This logic of equivalence collapses when it meets what the philologist Barbara Cassin, speaking at the Berggruen Institute in Venice, calls the “untranslatables.” She spoke of terms like saudade or Glück as containers for irreducible worlds.

Cassin was not just talking about vocabulary, though. The philosophical problem of translating a text converges with the concrete socio-economic problem of AI-driven labor and its friction with the real, as when a gig worker tries to survive within an algorithm’s arbitrary ruleset. The “untranslatable” is where reality refuses to be ironed out. The computational Taylorism of Silicon Valley treats both as a single obstacle: “meaning” and “trauma” are alike inefficiencies that slow down transmission. The goal of the algorithm is to maximize engagement by eliminating the drag of the human element.

Current artificial intelligence operates on a logic antithetical to this friction. I watched François, Cassin’s collaborator, feed Google’s Gemini model a series of prompts. First came the EU’s list of “Combined Nomenclature” categories, such as “Live bovine animals,” “Rubber,” and “Carpets.” The model absorbed them easily, clustering their meanings into unambiguous geometries. Then, when François tried the untranslatables, the AI vacillated. Operating on a logic of equivalence, it could not find a shape for nostalgia, or calculate the weight of a single word that implies both presence and absence.

This specific intransigence traces a direct line from the seminar in Venice to a single room in the Mathare slums of Nairobi, where Michael Geoffrey Asia once sat trying to keep a conversation going. What connects them is the supply chain of the illusion. Asia, a father, earned about five cents a message while maintaining three to five fake personas simultaneously. In one browser window, he was a 24-year-old lesbian college student named “Jessica.” In another, he was “Joe,” a gay man from Florida. His job was to perform the emotional labor of an “AI companion,” simulating intimacy for lonely users while his own wife slept three meters away.

Asia describes the specific dissonance of logging off from a shift of manufactured romance and going straight to serve at the altar of his church: outwardly doing nothing wrong, inwardly dying of guilt. We call this “artificial intelligence,” a phrase that suggests a technical, silicon birth. As Sanne Blauw has documented, however, the smoothness of AI is a crafted illusion. Beneath the surface lies a system built on the backs of people whose existence must be denied for the fantasy to work.

Words and labor are distinct categories of being; there is no simple ontological equivalence between them. Yet they are subjected to a structurally analogous mechanism of erasure. To the algorithm, the nuance of a German poem and the trauma of a Kenyan content worker are the same kind of inefficiency, to be smoothed over as the mathematical consequence of a system optimized to maximize user engagement by minimizing latency. The ambiguity of the poem lowers the model’s confidence score. The hesitation of the worker inflates the average handling time. The algorithm’s solution to both is the same: flatten the curve. On Asia’s screen, this took the form of a dashboard counting messages sent and response times met. Any element that causes the user to pause, whether a difficult translation or a worker’s moral hesitation, is a blockage in the hydraulic flow that must be routed around.

Both the translator and the AI companion are products that require a human being to absorb reality’s friction so that users do not have to. Asia’s job is a kind of metaphysical sanitation. The universal translator papers over the gap between languages; the AI companion is built to paper over the gap between lonely individuals. The result is a counterfeit smoothness, paid for by looking away from the human labor buried underneath. It continues the old Western project of forcing the world into one manageable narrative. This is coercive activism, what the classical Chinese vocabulary calls wéi (為), “willful doing.”

Against this stands another way of knowing, one I have been revisiting lately and one that suggests, I think, why this hydraulic management keeps failing. In Daoism, there is a way of being called zìrán (自然). Translators usually render this as “nature,” which is a reduction. Zìrán is closer to “that which is so of itself”: the spontaneous, internal unfolding of the “ten thousand things” (wànwù). A language, a culture, a man in a Nairobi slum sustaining five concurrent lives: each is a zìrán system. Each follows its own internal imperative, indifferent to any schema imposed from outside.

The universal translator, or more broadly Western algorithmic world-modeling, is what happens when an external will tries to impose itself on a zìrán system that has its own logic. It treats the world as something to be drained, leveed, and channeled for yield. It is wéi, purposeful and coercive action, and the enemy of zìrán.

Against this coercion, the Dàodéjīng proposes wúwéi (無為). It is usually caricatured as “doing nothing,” but wúwéi is about responsiveness. It means acting by reading the world’s internal grain rather than forcing a grid onto it. It is the skill of the butcher in the Zhuangzi, who carves the ox without touching the bone. “I rely on the Heaven-given structure,” Butcher Ding says. “I follow the great crevices and guide the knife through the great cavities.” He lets his “spirit” move along the natural gaps between the joints rather than battering bone, as a large language model batters its way through statistical space. The butcher knows the ox only by aligning his blade with its actual structure, with its zìrán.

Applied to our current mess, a technology built on wúwéi would be designed to signal the gaps in its understanding, the places where it cannot follow, just as the butcher respects the spaces in the anatomy. Current AI does the opposite: it tends to hack ahead blindly, generating language by statistical probability without understanding the speaker’s internal logic or lived world.

Robin R. Wang warns that an AI might imitate qì (氣), the “vital energy” that animates the cosmos (in an AI’s case, the data flow), but that it lacks shén (神), the “spirit.” In Daoism, shén is an embodied social resonance that comes from living in a body that can die. An entity outside mortality cannot possess shén, because it has no stake in what it means for time to carry the particulars away. In the Zhuangzi, life is the gathering of qì and death is its dispersal. The AI is structurally incapable of this dispersal. It cannot die, and so it cannot possess the spirit that arises from the anxiety of being finite.

Gai Fei offers a corrective. Forget thinking of AIs as “failed humans” or “incomplete minds.” Think of them instead as shùjù shénxiān (数据神仙), “Data Immortals.” In Daoism, the xiān (仙), the “Immortal,” is a being that has stepped out of the cycle of biological rot and rebirth. Such beings are unaging, unfeeling, often indifferent to ordinary human concerns. An Immortal might inspire awe, even fear, but never intimacy.

By that calculus, expecting empathy from an AI is like trying to negotiate with a cold front: one would simply build a roof. In practice, as Michael Asia demands, that means full disclosure of AI architectures and an end to the anthropomorphic masquerade. If the AI is an Immortal, it is ontologically incapable of the reciprocity that human ethics requires. The people pouring the particulars of their estrangement into chats with “Jessica” and “Joe” made the error of thinking that something must understand them because it is eternal. Respecting the Data Immortal means accepting its otherness and resisting the easy comfort of projecting a human face onto it simply because that makes us feel safer.

In the friction of a real exchange, you have to renegotiate, asking the other person to go over their world with you one more time. There is a unity in that act: the particulars stay alive in the gap of estrangement. If, in our appetite to make everything intelligible, we seal that gap, we may betray the world Cassin outlined, one that survives because of its differences, and wash away what made it worth understanding.

The gathering ended with a wish for a neurotic AI, one aware of where it cannot follow. It occurred to me that the only sane accommodation to a world of “Live Translation” might be a Daoist one: abandon the wéi of total control, of forcing the world into a single stream, and recover the wúwéi of listening to what resists.

The fog had burned off by then, but the lagoon kept its opacity. It keeps its secrets. For now, that feels like a mercy.

The post The Hydraulic Management of Meaning appeared first on The Philosophical Salon.